When designing a board game, it takes a lot of fine-tuning to get the balance right

and make the game fun to play. This fine-tuning in turn requires you to play endless

iterations of the game, or does it?

The AI Use Case Game

A while back my colleague Walter

called me up, saying he was looking for an expert in board games to help him

design our own game.

While I do like to play a board game once in a while, I am by no means an expert,

but I like to take on a challenge so I decided to see if I could help out.

Walter already had quite a clear idea of what he wanted to make: a board game where

you take turns to walk around a Monopoly-style track and try to complete

AI use cases. We discussed for a while, and quickly realised that we would need to

start playing to figure out which concepts do or do not work in a board game.

So we drew the board on a sheet of paper, used Python to simulate

dice rolls and took another sheet of paper to keep track of our balances

as we did not have any dice nor game money at the ready.

We quickly realised that playing like this wasn’t particularly fun. And, if we were

to create a well-balanced game we would need to play a lot of games. If only there

was a way to automate that…

So, I decided to write a simulation that could play the game for us. But before

we dive into the simulation, let’s have a look at the end result.

The Game

In the game, you lead a team of data scientists and engineers. Your

goal is to create as much business value as possible

for your company while you and competitors each

finish up to three use cases.

The game is played on a board which looks like this:

Phases of the Game

All players start the game in the ideation field, where the goal is

to come up with a use case. While in this phase, you may pick a new use

case card every turn, and add it to your backlog.

After picking a use case card you may decide to start developing it, in

which case you move your pawn to the "start" of the ideation phase.

You will have to successfully pass the infrastructure, data & ETL,

modelling and productionizing phases in order to complete the use case.

Building a Team

When you are developing a use case, your turn starts with a die roll.

Then, you move your pawn forward by the number of eyes on the die,

offset by your handicap. Your handicap is your team size

minus the desired team size as provided on the use case card: this means

that if your team size is too small, you may actually move backward!

If your team size is more than three people short, you will never be able

to complete a use case.
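
The movement rule can be sketched in a few lines of Python. This is only an illustration of the rule as described above, not the code of the actual simulation: the function names `handicap`, `move` and `expected_move` are made up for this example, and a single fair six-sided die is assumed.

```python
import random


def handicap(team_size: int, desired_team_size: int) -> int:
    """Handicap as described in the rules: team size minus desired team size."""
    return team_size - desired_team_size


def move(team_size: int, desired_team_size: int, rng: random.Random) -> int:
    """Fields moved this turn; a negative value means moving backward."""
    roll = rng.randint(1, 6)  # assumption: one fair six-sided die
    return roll + handicap(team_size, desired_team_size)


def expected_move(team_size: int, desired_team_size: int) -> float:
    """Average fields per turn: 3.5 for a fair die, plus the handicap."""
    return 3.5 + handicap(team_size, desired_team_size)


# Average progress per turn for a use case with a desired team size of 6.
for team_size in range(2, 7):
    print(team_size, expected_move(team_size, 6))
```

In this sketch a team that is four people short moves -0.5 fields per turn on average, which is in line with the remark that such a team does not get to the finish line.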

If you cross one of the yellow lines named "team",

then you must take a team card from the stack.

These team cards affect your team size,

and may provide you with a new team member, may result in you losing a

team member, or offer you one or more team members in return for a fee.

All players start with two team members, and growing your team during

the game is essential!

But a large team also has a disadvantage: team members are expensive.

You will need to pay your team members’ salaries at the end of every turn,

even during ideation. If you ever run out of money, you will need to

take a reorganisation card from the stack. This card will provide you

with some extra budget or exempt you from having to pay the salaries for a turn,

but may cost you business value or a team member.

Budget

Each use case comes with a budget; you will receive part of the budget when

starting the use case, and the remainder once you have passed the green

dashed line named "budget" halfway along the board.

Action Cards

If you land your pawn on one of the gray fields with an icon, then you

must take an action card of the phase you are in. These action cards may

provide you with a benefit, but they may also give you a disadvantage.

The effect may concern your budget, your progress (fields on the board),

a number of turns to skip, or in extreme cases it may force you to abandon the use

case.

Taking the Use Case into Production

Once you pass the finish line you have completed the use case and may

collect the business value associated with it. The next turn you may choose to

start ideation and draw a new use case, or start developing a use case

that is already in your backlog.

The game ends when a player has finished their third use case; the other

players then still get to finish the round. The player who created the

most value wins!

The Simulation

When you make a simulation for any kind of game, the implementation of the

game rules is usually the easy part. Modeling the behaviour of players is

much more complicated: they don’t follow strict rules when making decisions.

And if you do make a set of rules that the players use to make their decisions,

then you can have a lot of fun optimising these rules. A few years ago, I did exactly that

for the board game Risk.

For this new game, my focus wasn’t the optimal strategy but the game itself.

So instead of spending a lot of time on the decisions of the players, I spent more

time making sure the game rules were easily configurable. I made some decisions on

how players react to certain situations, and I assumed that the game would only

become better when people actually put thought into it.

That may sound like a dangerous

assumption, but since the chance element of the game is fairly heavy I think that

the strategy component is not so important.

If you are interested in the implementation, have a

look here.
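
To give an idea of what "easily configurable" can mean in practice, here is a minimal sketch of a rules object. It is not taken from the actual implementation: the class `GameConfig` and all of its field names are hypothetical, and only the starting team size of two and the three use cases to finish come from the rules described above. The other defaults are placeholders.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class GameConfig:
    """Hypothetical rule parameters; names and most defaults are illustrative."""

    n_players: int = 4            # assumption
    n_fields: int = 40            # assumption: length of the track
    use_cases_to_finish: int = 3  # from the rules: the game ends at the third use case
    start_team_size: int = 2      # from the rules: everyone starts with two team members
    salary_per_member: int = 1    # assumption
    start_budget: int = 10        # assumption


base = GameConfig()
# Try a variant with a longer board without touching any game logic.
longer_board = replace(base, n_fields=50)
print(longer_board)
```

Keeping the rules in one immutable object like this makes it cheap to run the same simulation against many rule variants and compare the outcomes.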

Optimizing the Game

Once the simulation was (mostly) finished, we could start optimizing the game.

Being a data scientist, I wanted to define a loss function and then let

some algorithm find the optimal game. But it turns out to be difficult

to capture the notion of a "fun" game in a loss function.

So we went with doing the optimization ourselves, looking at multiple aspects

of the game and going with our gut feeling of what is a fun game.

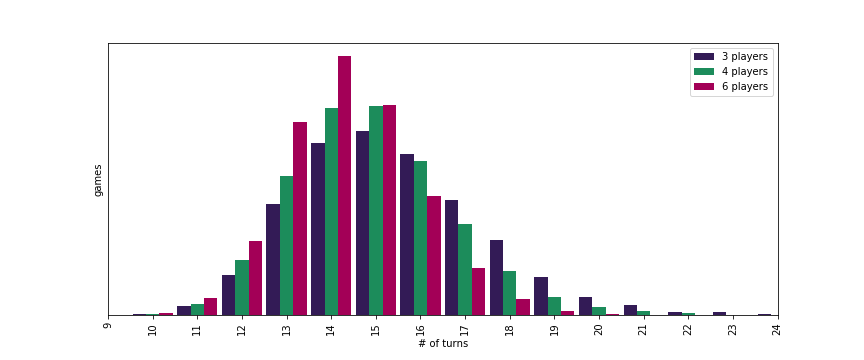

Duration of the Game

Perhaps the most important parameter to optimise was the playing time. No one

likes a game that takes half a day or one that is finished in a single turn.

So we played around with the number of fields on the board, the number of use

cases to complete and the action cards until we were happy with the result.

Of course the expected number of turns varies with the number of players: the

more players, the more likely one of the players will be done after a given

number of turns. In the end, we settled on about 15 turns per game, which would

make the game playable in well under an hour.
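
The effect of the number of players on the game length can be illustrated with a toy Monte Carlo simulation. This is a strong simplification and not the real game: there are no cards, teams or budgets, each player simply rolls one die per turn, and the game ends in the round in which the first player has covered a track of a hypothetical length. The function names and the default `track_length` of 50 are assumptions made for this example.

```python
import random
from statistics import mean


def turns_until_first_finish(n_players: int, track_length: int, rng: random.Random) -> int:
    """Toy model: count the rounds until the first player reaches the end of the track."""
    positions = [0] * n_players
    turns = 0
    while max(positions) < track_length:
        turns += 1
        # Every player rolls one six-sided die and moves forward.
        positions = [p + rng.randint(1, 6) for p in positions]
    return turns


def average_turns(n_players: int, track_length: int = 50, n_games: int = 2000, seed: int = 0) -> float:
    """Average game length over many simulated games."""
    rng = random.Random(seed)
    return mean(turns_until_first_finish(n_players, track_length, rng) for _ in range(n_games))


for n_players in (2, 4, 6):
    print(n_players, round(average_turns(n_players), 1))
```

Because the game ends as soon as the fastest player is done, adding players lowers the average number of turns in this toy model, which is the same effect described above.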

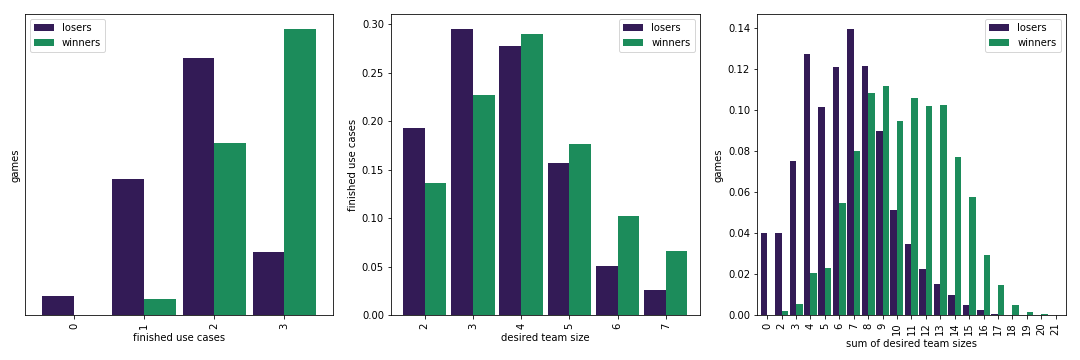

Use Cases

Next up were the use cases, in which we needed to balance the desired team size

and the resulting value.

We wanted the ideal path for a player to be to first develop a simple use case

(with a desired team size of 2 or 3), then a moderately complicated one (4-5), and finally

a complicated use case (6+). If we didn’t balance the business value well, it could end

up being a better strategy to finish three simple use cases as quickly as possible.

Above you see the results after balancing: on the left we see that winners

have often completed three use cases, but it is also possible to win with

only two use cases. That is great: this means players have to balance quickly

finishing three use cases versus finishing some with more value.

In the middle plot we see that winners typically finish use cases with a

higher desired team size, while on the right we see that these winners

typically finished use cases with a total desired team size between 8 and 13.

If you manage to finish one use case in each of the three categories you

would end up with a sum of 12+, which practically means that you won.

That is exactly what we were aiming for!

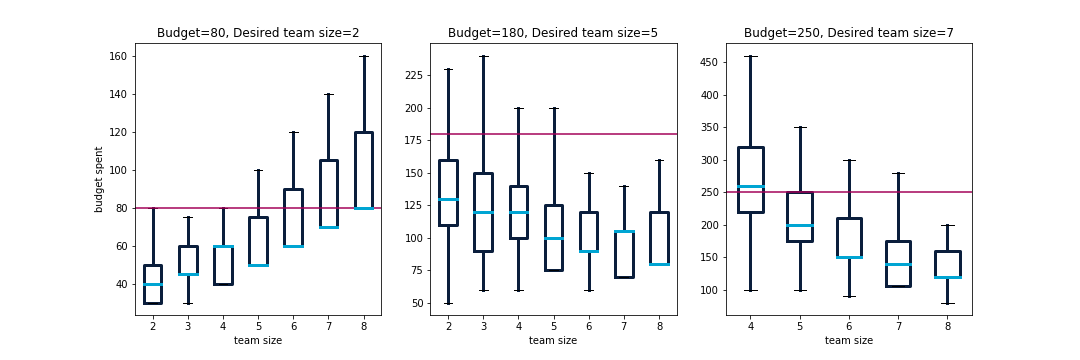

Budget

Also important are the budgets the use cases provide and

the budget that players start with. We want it to be fairly doable

to finish the game without taking any reorganisation cards, but it shouldn’t

be impossible to run out of budget either.

So we had a look at each use case and the expected amount of budget needed

to complete it. This, of course, depends on the team size: the larger the team,

the faster you move but the more expenses you have. Below are a few examples,

ranging from very simple to very complicated. We’ve plotted the total spent

budget while completing the use case for each possible team size.

As you can see, it is very possible to complete each use case as long as

your team size is in the right ballpark. If your team is much too small

or much too large, your budget may run out.
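
A rough way to estimate the budget spent per team size is to simulate a single use case many times and add up the salaries. The sketch below is a toy model and does not reproduce the plots: it ignores action, team and reorganisation cards, assumes the pawn cannot move back past the start, and uses a hypothetical track length, salary and desired team size. All names are made up for this example.

```python
import random
from statistics import mean


def spend_to_finish(team_size, desired_team_size, track_length, salary, rng, max_turns=200):
    """Total salaries paid until the pawn passes the finish line (toy model)."""
    position, spent = 0, 0
    for _ in range(max_turns):  # cap the game length so tiny teams cannot loop forever
        step = rng.randint(1, 6) + (team_size - desired_team_size)
        position = max(0, position + step)  # assumption: cannot move back past the start
        spent += team_size * salary         # salaries are paid at the end of every turn
        if position >= track_length:
            break
    return spent


def expected_spend(team_size, desired_team_size=4, track_length=30, salary=1,
                   n_games=1000, seed=0):
    """Average spend over many simulated attempts at one use case."""
    rng = random.Random(seed)
    return mean(
        spend_to_finish(team_size, desired_team_size, track_length, salary, rng)
        for _ in range(n_games)
    )


for team_size in range(1, 9):
    print(team_size, round(expected_spend(team_size), 1))
```

In this toy model a team that is far too small is expensive because it barely makes progress while salaries keep coming in. Whether a given team size actually runs out of money depends on the budget of the use case, which this sketch leaves out.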

Finalizing the Design

Of course, the rules and the balance of the game aren’t everything that you need.

Walter did a great job with the design of the game, which is equally important

because no one likes to play a game that is visually unappealing. By now we have

several copies of the game, and we’ve had people play it with us on several

occasions. So far the reception has been great… perhaps I should give

it a try once, as I haven’t played the game myself yet. But my computer has

had its fair share with at least a million games.

Want to try the AI Use Case game yourself?

The AI Use Case game is one of the elements of the Data Science for Product Owners training. Next to playing this awesome board game, the course will teach you everything you need to know about driving value with data & AI.