New data tools pop up every now and then (e.g. Rapids and Databricks Delta). Adoption of such tools, however, takes time. Both for the framework to get stable and for potential users to position it in their software stack. The question is: which tools will stick?

We’ll try to help you answer that question for a tool called Dask. First released in 2014, the project reached 1.0 status by October 2018. By now the project should be reasonably stable; a good moment to investigate what this tool can do for our customers.

What is Dask

First let’s hear what the authors have to say:

Dask is a flexible library for parallel computing in Python.

Dask is composed of two parts:

- Dynamic task scheduling optimized for computation. This is similar to Airflow, Luigi, Celery, or Make, but optimized for interactive computational workloads.

- “Big Data” collections like parallel arrays, dataframes, and lists that extend common interfaces like NumPy, Pandas, or Python iterators to larger-than-memory or distributed environments. These parallel collections run on top of dynamic task schedulers.

In other words, Dask is a general framework to parallelize data manipulation of both unstructured (Python data structures) and structured (tabular) data. The latter is the main focus as Dask was clearly inspired by more established projects such as Pandas. It is not too far off mark to think of Dask as doing the same things as Pandas, but in parallel.

Pulling this off requires some rethinking of sequential algorithms. As a simple example take the operation of computing the average value of a set of numbers. It is necessary to determine what happens in parallel ("map") and how to combine the outcomes ("reduce"). Each worker should compute two intermediate results, a "sum" and a "count", and communicate these on the main node that produces the end result. Note how simply splitting up the work and applying the sequential algorithm on each worker will not get you there. The main value of a framework like Dask, for structured data manipulation, is to relieve us of the overhead of coming up with and programming these parallel algorithms ourselves.

To keep the learning curve of its framework as gentle as possible, Dask attempts to stay close to home for most data professionals. It does so by leveraging existing packages such as Pandas and NumPy in the PyData ecosystem. It builds upon these packages and resembles their APIs.

A note on "scaling" aka parallelization

Note that scaling does not have to mean distributed among a cluster of computers. Sometimes you want to speed up analytics workloads (through parallelization) on a single machine with multiple CPUs or cores. For example when performing data analysis with custom Python operations in a notebook environment. In such cases Dask provides a welcome abstraction for many common dataframe manipulations.

Alternatives that might Spark your interest

Considering the scope of the Dask project, it feels natural to compare it to Apache Spark, a technology with wide adoption that has proven its value over the past years.

The thought that these projects are quite simliar is echoed by the Dask developers: they dedicated a Help & Reference page to this very topic.

Dask vs. Spark

It’s just Python

Where Spark is build in Scala (with Python support) Dask is coded in Python. It stays closer to the PyData ecosystem by building upon libraries such as NumPy, Pandas and Scikit-Learn. This facilitates debugging as it becomes possible to follow stacktrace all the way into the Dask framework internals. The same thing isn’t really possible in PySpark because most API calls are more or less immediately handed off to Scala. Thus they remain out-of-view of the IDE/ Python debugger. Besides facilitating introspection, native Python is easier to extend and adapt to whatever specific needs you may have.

Maturity

Dask is a younger project, and thus less known and embedded in current software stacks. Most new technologies move through a phase of brittleness / growing pains featuring some quirks or "gotcha’s". Admittedly, during our brief evaluation of Dask, we did find ourselves deciphering the stacktraces of unnamed exceptions in an unfamiliar codebase on a few occasions. To be fair, this is to be expected to some extent and not necessarily a deal breaker. Besides, the average data scientist’s journey to mastering Spark isn’t a walk in the park either. Nevertheless, Spark has been around longer with larger adoption, and consequently most glaring issues have been resolved, either through updates or workarounds, by the community (e.g. on Stackoverflow).

DAG optimization

Both Spark and Dask build an execution plan before computing anything. APIs for computations on structured data are highly declarative: they specify what the result should look like instead of how to compute it. Figuring out the ideal way to perform a distributed computation can be very difficult and depends on many factors related to data distributions and available compute resources. The advantage of declarative APIs is that they can (potentially) outsource much of this puzzle to the computer. This frees the user to work on higher level problems and, if done well, results in better solutions too. Most longstanding relational database implementations are very efficient because they have gone through many iterations of optimization and improvement.

Whereas Spark improves upon the plan through multiple layers of optimization (logical and physical), Dask seems to optimize in a more limited fashion. For example, when a query plan contains a reduction of rows or columns, Spark will schedule this reduction as early as possible ("predicate pushdown"). This is efficient because it avoids processing data that isn’t required by the result. The same thing is possible in Dask but will only happen when explicitly specified by the user.

Async API

Dask provides its users with an async API ("futures API") that integrates with asyncio, a project that enables green threading and is part of the Python standard library. Dask runs in a process separate from the initiating Python process. When submitting a job to the Dask cluster, the main process is I/O bound, making it possible to do something else concurrently. In other words, it is possible let Dask perform some long running calculation without blocking the main thread, while waiting for the result. Although it is possible to submit a non-blocking job using the PySpark CLI, the same thing cannot be done from a Python interpreter.

The Dask tutorials contain a cool demonstration of the advantage of async calls: batched, iterative computations. Computations are performed in parallel within each batch, but individual results are collected as early as possible. Meanwhile a graph is updated constantly, meaning that the figure develops while computing. For a more practical application consider a webservice that requires Dask for servicing some of its incoming requests. Jobs could be submitted to the cluster while the service remains open to new, concurrent connections. This way Dask would integrate well with an async webserver such as Sanic whereas PySpark wouldn’t.

Verdict

In our experience Spark is still more stable and mature, and thus remains our tool of choice. But like we said, it takes time to adopt a new tool and with time our opinion might change.

If you do want to take Dask for a spin then take the following into consideration:

- When possible stick to Pandas or scikit-learn and only move to Dask when necessary, because you don’t want to add complexity/overhead if it is not necessary.

- If you start out with semi- or unstructured data, try to move away from the low-level Dask bag API and move towards the high-level Dask dataframe API as soon as possible. Because of its simpler API you will make less mistakes yet end up with a comparable performance.

- Follow the tutorial we mention below which will explain the above in more detail.

Getting experience

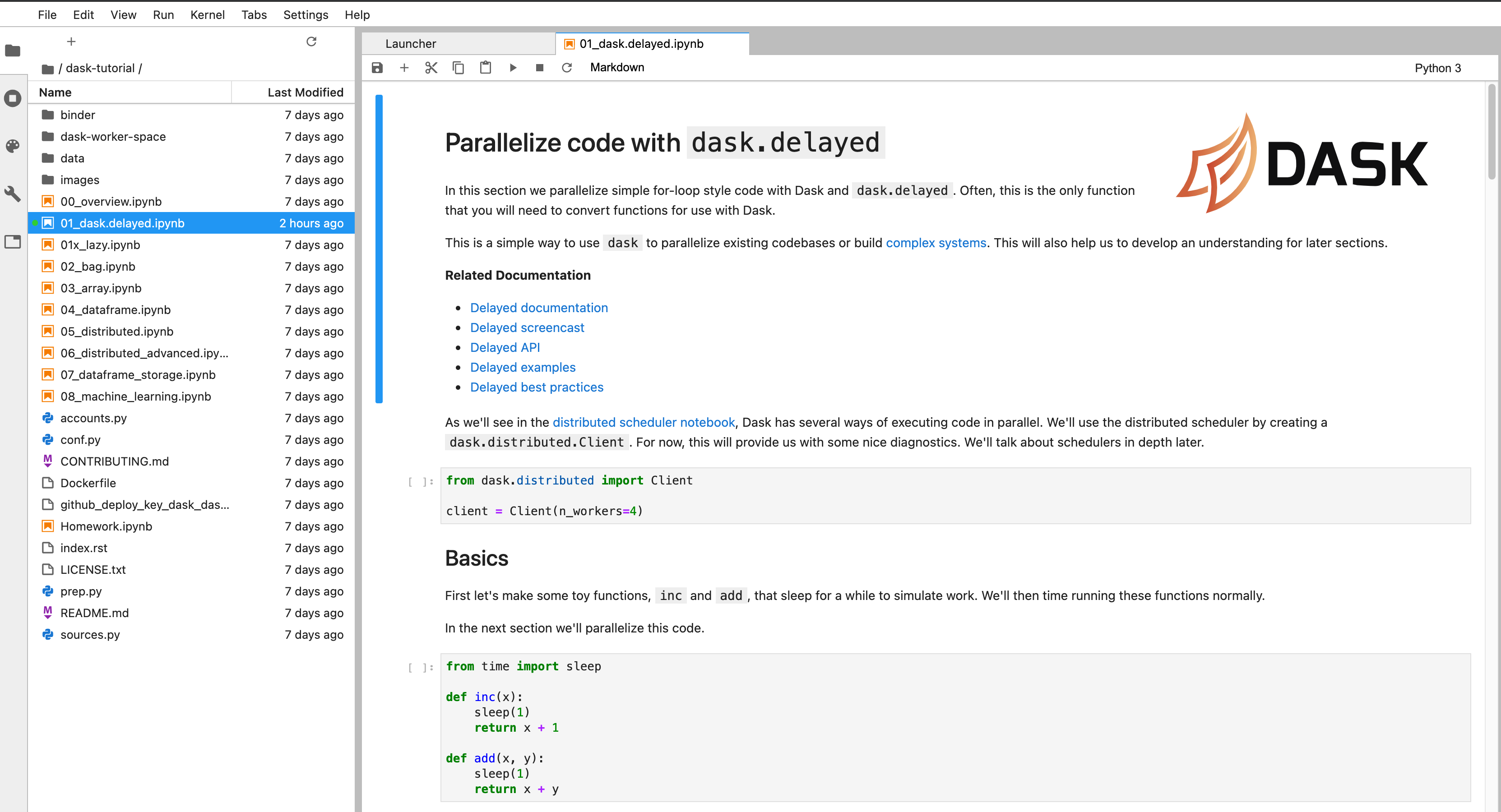

Interested? We can recommend a very elaborate tutorial. You can even use Binder (for free) to launch a completely setup environment including compute in only one click.

The tutorial goes over the different user interfaces that Dask makes available to the user, e.g. Dask bag, Dask array and Dask dataframe. Further, it explains the more low-level user interfaces Delayed and Futures; used when your problem doesn’t fit any of the data collection described above. The tutorial ends with a notebook on Machine Learning using Dask, where they e.g. explain how Dask can parallelize a gridsearch in Scikit-learn.

What do you think?

We are interested in your experience too. Have you used Dask? What did you experience? Feel free to reach out and hopefully we will see you around in the community!